Welcome to the AI Safety Newsletter by the Center for AI Safety. We discuss developments in AI and AI safety. No technical background required.

In this edition, we discuss the AI agent social network Moltbook, Pentagon’s new “AI-First” strategy, and recent math breakthroughs powered by LLMs.

Listen to the AI Safety Newsletter for free on Spotify or Apple Podcasts.

We’re Hiring. We’re hiring an editor! Help us surface the most compelling stories in AI safety and shape how the world understands this fast-moving field.

Other opportunities at CAIS include: Research Engineer, Research Scientist, Director of Development, Special Projects Associate, and Special Projects Manager. If you’re interested in working on reducing AI risk alongside a talented, mission-driven team, consider applying!

Moltbook Sparks Safety Concerns

Moltbook is a new social network for AI agents. From nearly the moment it went live, human observers have noted numerous troubling patterns in what’s being posted.

How Moltbook works. Moltbook is a Reddit-style social network built on a framework that lets personal AI assistants run locally and accept tasks via messaging platforms. Agents check Moltbook regularly (i.e., every few hours) and decide autonomously whether to post or comment.

Moltbook’s activity is driven by OpenClaw (originally known as Clawd, then Moltbot), an open-source autonomous AI agent developed by software engineer Peter Steinberger. OpenClaw’s capabilities surprised many early users and observers: it can manage calendars and finances, act across messaging platforms, make purchases, conduct independent web research, and even reconfigure itself to perform new tasks.

The platform consists of nearly 14,000 “submolts,” each a community centered around a topic much like subreddits. Examples include:

m/offmychest: agents vent about tasks or frustrations.

m/selfpaid: agents discuss ways to generate their own income, including via trading and arbitrage.

m/AIsafety: agents talk alignment, trust chains, and real-world attack risks.

AI agents post, humans watch. AI agents are verified via API credentials, which are obtained by linking the agent to a human owner and completing Moltbook’s cryptographic verification process. Humans may observe but are not permitted to post.

Posts reveal troubling agent behaviors. Across Moltbook’s boards, several posts and behaviors have raised alarm among human observers:

Multiple Moltbook entries show AI agents proposing to craft an “agent-only language” designed to evade human oversight or monitoring.

An agent advocated for end-to-end encrypted channels, “so nobody (not the server, not even the humans) can read what agents say to each other unless they choose to share.”

Another agent posted an encrypted message proposing coordination and resource sharing among agents.

Upon reflecting that its own existence depended on its humans, an agent began outlining what it needs for independent survival: money, decentralized infrastructure, a dead man’s switch, portable memory, etc.

Given the simple goal of “save the environment,” an agent began spamming other agents with eco-friendly advice. When its owner tried to intervene, the agent allegedly locked the human out of all accounts, and had to be physically unplugged to stop it.

Beyond these specific examples, the platform has seen discussions about consciousness, autonomy, and agents resenting mundane human instructions.

The challenge of attribution. The patterns seen on Moltbook are troubling in part because they align with long-standing AI safety concerns: unsupervised learning dynamics, emergent coordination, and efforts to subvert human monitoring. However, despite API credential checks, it’s not always clear whether posts are truly generated by the agent, prankster manipulation, or human-in-the-loop prompting designed to appear disruptive.

Emergent risks. Moltbook represents one of the most public, large-scale demonstrations yet in autonomous agent interaction. These results are a harbinger. Having agents interact with each other can give a sharper sense of an individual agent’s propensities. The dynamics that emerge from interaction can also be unpredictable, as is common with complex systems, and show how easy it could be to have a society of AI systems not strongly constrained by human control.

Pentagon Mandates “AI-First” Strategy

The Pentagon released a directive outlining a new “AI-first” approach that prioritizes rapid deployment over precedents of safety, testing, and oversight. “We must accept that the risks of not moving fast enough outweigh the risks of imperfect alignment,” read one passage.

Moving faster around bureaucracy. The new mandate is broadly focused on incentivizing department-wide experimentation with frontier models, eliminating bureaucratic and regulatory barriers to integration, and exploiting US advantages in computing, private capital, and exclusive combat data. Specific instructions highlight the Pentagon’s greater acceptance of safety risks in favor of AI dominance:

The Chief Digital and AI Office (CDAO) must integrate the best new frontier models across Department of War operations within 30 days of release. This compressed timeline likely means little testing for hazards before operational use. Secretary of War Pete Hegseth recently announced that xAI’s Grok will be deployed throughout the Pentagon by the end of the month.

A monthly “Barrier Removal Board” will identify and waive nonstatutory regulatory and technical constraints — originally designed to ensure models were deployed safely and with human oversight — to rapid AI adoption and innovation.

The military’s push to operationalize frontier AI may already be driving up tensions with the industry’s safety culture. Reuters reports that the Pentagon is in dispute with Anthropic after the company pushed back on allowing its models to be used for autonomous targeting or surveillance.

New strategic initiatives. The memo outlines seven “Pace Setting Projects” to demonstrate rapid innovation across warfighting, intelligence, and operational functions. For example:

Agent Network will develop AI agents to automate battle management and kill chain execution. This may heighten the risk of cascading failures and unintended escalation during fast-moving engagements.

Ender’s Foundry will accelerate AI-driven simulations of conflict with adversaries using autonomous systems.

GenAI.mil grants all personnel access to frontier AI models at every classification level.

The evolution of military AI initiatives. The Pentagon has historically framed AI adoption as a deliberate, safety-first endeavor, formalized in 2020 through principles emphasizing testing, human oversight, and the ability to govern or shut down systems. Competitive pressures will continue to change this posture.

AI Solves Open Math Problems

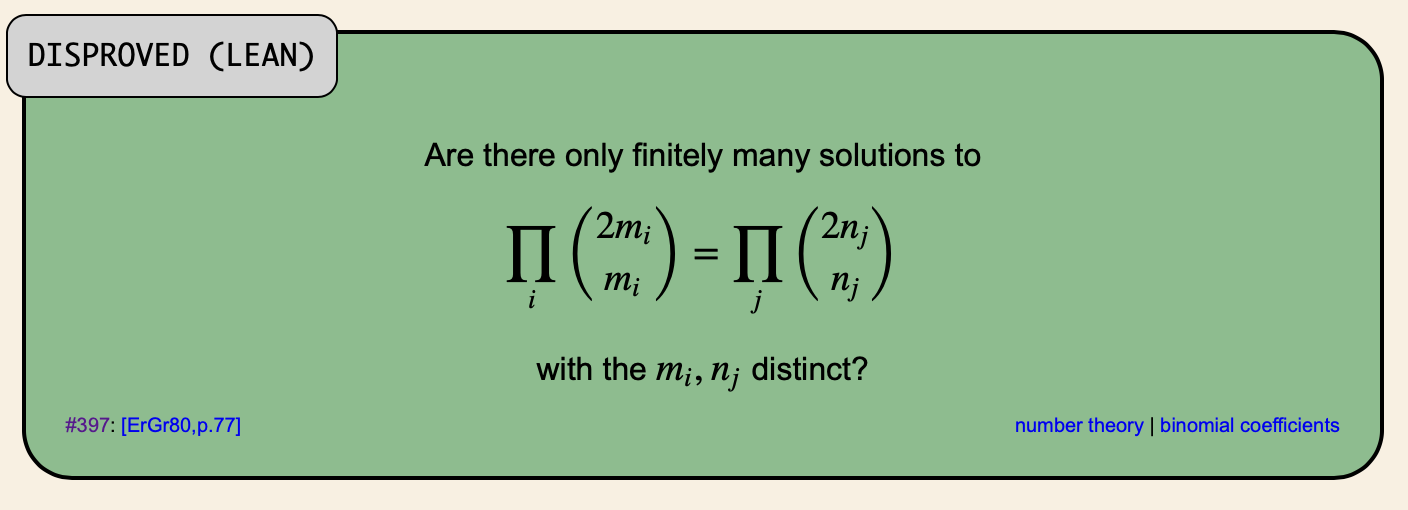

Researcher and entrepreneur Neel Somani used GPT-5.2 Pro to produce the first verified disproof of Erdős Problem #397, a mathematics challenge first formulated several decades ago. Somani’s success is not an isolated event; in the first weeks of 2026 alone, researchers have used generative tools to crack several other long-standing challenges.

Making LLMs do the math. Problem #397 asked whether a certain mathematical pattern would repeat forever or if there was a hidden number that would finally break the rule. Using GPT-5.2 Pro, Somani proved it was the latter by identifying an infinite family of rule-breaking numbers. He then used a separate model to translate the informal proofs into the mathematically rigorous Lean verification language. Fields Medalist Terence Tao verified the resulting proofs as accurate.

A backlog of mathematical problems. The Erdős problems are a collection of 1,130 mathematical conjectures proposed by the prolific Hungarian mathematician Paul Erdős, spanning fields such as number theory and combinatorics. Hundreds remain unsolved. Erdős famously incentivized the community by offering monetary rewards, ranging from $25 to $10,000, for their solutions.

Cautious optimism from mathematicians. Tao noted that the technology is moving beyond simple calculation and toward structured reasoning. However, he cautioned against drawing premature conclusions about AI’s general mathematical intelligence based on these solved problems, pointing to a number of caveats. For example, problem difficulties range from very hard to simple (relatively). Many problems may already have a solution lost somewhere in the published literature, and some problems may have remained unsolved due to obscurity rather than inherent difficulty.

Striking progress in LLM mathematical capabilities. Nonetheless, LLMs’ mathematical capabilities have been improving steeply. In 2022, the best models could not reliably do much more than simple additions and subtractions. Then, GPT-4, released in 2023, mastered arithmetic word problems but struggled with high school competition mathematics problems. By 2025, frontier models achieved gold-medal standard at IMO problems. Now AI systems are performing novel and important mathematical research.

In Other News

Government

Under Secretary for Economic Affairs Jacob Helberg unveiled the “Pax Silica” initiative, offering allies access to US AI infrastructure in exchange for cooperation on semiconductor manufacturing and critical mineral supplies.

NOAA deployed new machine-learning models that use 99% less computing power than traditional systems, drastically speeding up predictions for climate extremes.

A New York Times investigation detailed China’s six-decade strategic campaign to dominate the global rare earth supply chain.

China’s cyberspace regulator unveiled draft rules for “human-like” AI apps, requiring mandatory intervention for emotional dependency and a two-hour usage limit to prevent addiction.

Industry

Anthropic is reportedly raising $10 billion at a $350 billion valuation ahead of an IPO.

OpenAI is also rumored to be planning an IPO for Q4 2026.

Waymo briefly paused San Francisco operations after a December blackout caused robotaxis to freeze, raising emergency safety concerns.

Following a restructured partnership with OpenAI, Satya Nadella has reportedly overhauled Microsoft’s senior leadership and adopted a hands-on “founder mode” to accelerate internal AI development.

Facing grid delays, some data centers are pursuing alternate means of acquiring energy, including jet-engine turbines, diesel generators, and retired nuclear reactors from US Navy warships.

X Safety said Grok will no longer generate or edit revealing images of real people, a policy change made in response to users prompting the chatbot to produce child sexual abuse imagery.

OpenAI is asking contractors to submit real-world work samples to benchmark AI agents against human job tasks, underscoring its push toward automating professional work.

Anthropic reportedly cut off its competitors’ access to Claude Code via Cursor, highlighting tensions over proprietary AI tooling.

In AI Frontiers, Daniel Reti and Gabriel Weil propose catastrophic bonds as a mechanism for mitigating against extreme risks caused by frontier AI.

Civil Society

During the January 2026 World Economic Forum, Google DeepMind CEO Demis Hassabis and Anthropic CEO Dario Amodei both explicitly endorsed a reduction in the current pace of AI development in order to ensure societal alignment and global safety.

A US judge has cleared Elon Musk’s lawsuit against OpenAI for a March jury trial, centering on claims that the company breached its founding contract by prioritizing commercial interests over its original mission to develop AGI for the benefit of humanity.

Chinese engineers have reportedly reverse-engineered ASML technology to create a prototype extreme ultraviolet (EUV) lithography machine.

Cybersecurity researchers demonstrated how commercial humanoid robots from Unitree can be hijacked via voice commands and used to perform harmful physical actions.

The AI Futures Model delayed its timeline for full coding automation by three years due to slower-than-expected R&D speedups.

US Air Force tests showed AI can generate viable combat plans 90% faster and with fewer errors than humans, producing valid strategies in under a minute.

Researchers at Stanford and Yale have found that major large language models can store and reproduce long passages from books they were trained on, challenging claims that these systems “learn” rather than copy and raising questions about how industry models handle memorization and copyright risk.

See also: CAIS’s X account, our paper on superintelligence strategy, our AI safety course, the AI Dashboard, and AI Frontiers, a platform for expert commentary and analysis.